Content Moderation Jobs: Exclusive Remote Platform Work and Online Safety Roles

Content moderation jobs have become an essential part of the digital ecosystem as social media, online marketplaces, and other platforms built on user-generated content continue to grow rapidly. These roles play a crucial part in maintaining the integrity, safety, and overall quality of online environments. With the rise of remote work, content moderation has also become a viable career path that offers flexibility, distinctive challenges, and the chance to help make the internet safer.

What Are Content Moderation Jobs?

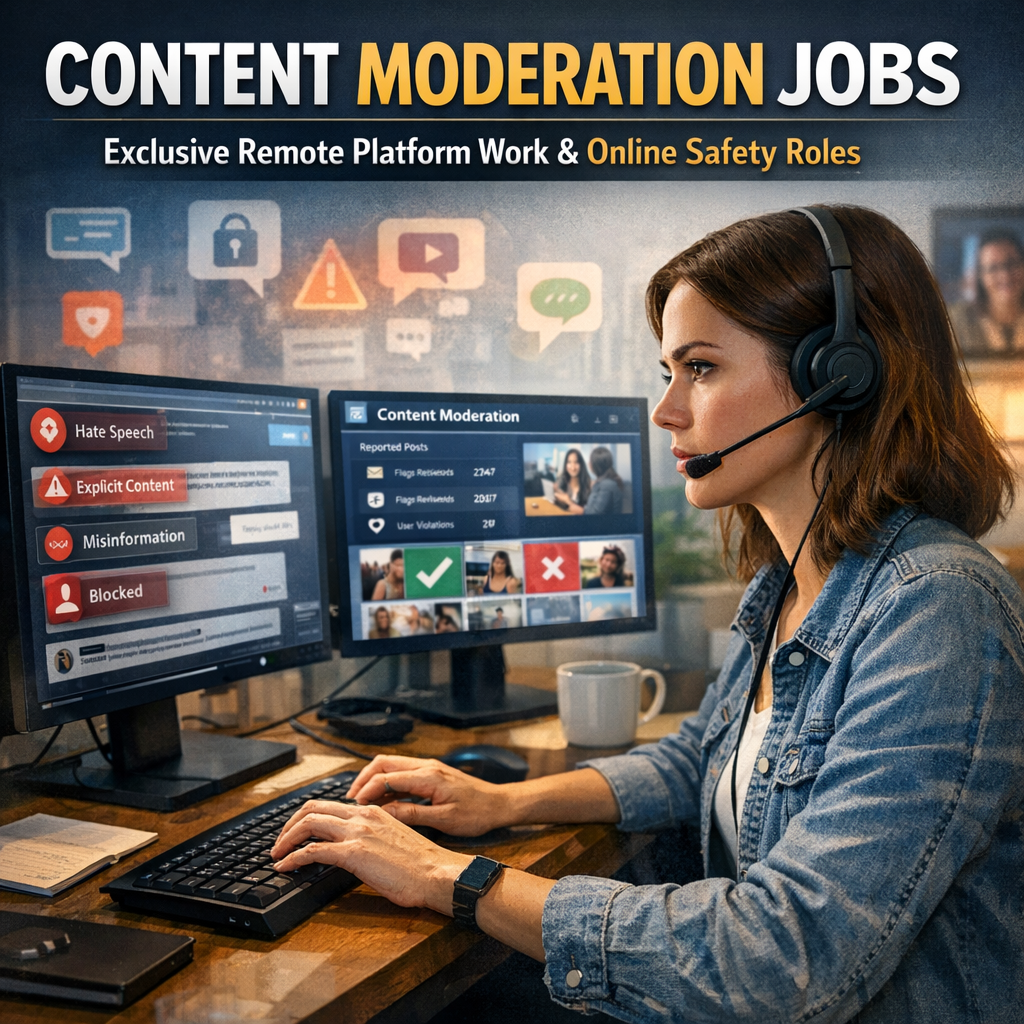

Content moderation jobs involve reviewing, monitoring, and managing user-generated content on websites and platforms. Moderators check that posts, comments, images, and videos comply with community guidelines and legal standards. They filter out harmful material such as hate speech, spam, misinformation, graphic violence, and other content that breaks platform rules, protecting users and fostering positive online interactions.

The growth in social media users and digital content has propelled demand for skilled moderators, especially those who can work remotely. Major companies, startups, and specialized firms increasingly rely on moderators to safeguard their platforms from harmful or disruptive content.

The Rise of Remote Platform Work in Content Moderation

Remote platform work has transformed many industries, and content moderation is no exception. Thanks to advancements in communication technology and cloud-based tools, many moderation roles are fully remote, allowing professionals to work from anywhere in the world. This shift benefits both employers, who gain access to a broader talent pool, and employees, who enjoy greater flexibility and work-life balance.

Remote content moderation jobs typically involve the same responsibilities as on-site roles, including vetting user content, responding to user reports, enforcing community guidelines, and sometimes communicating with users. Organizations may hire moderators on a full-time, part-time, or freelance basis, depending on the volume of content and the platform's specific needs.

Key Responsibilities in Online Safety Roles

Among content moderation jobs, online safety roles are particularly critical and specialized. These positions emphasize protecting vulnerable users from harassment, cyberbullying, exploitation, and harmful content. Online safety specialists often work closely with legal teams, privacy officers, and trust-and-safety departments to formulate policies and respond to incidents.

Typical responsibilities in online safety roles include:

– Screening content for signs of abuse, threats, or illegal activity

– Escalating severe violations to law enforcement or legal authorities

– Developing and updating content policies to address emerging risks

– Educating community members on safe online behaviors

– Collaborating with platform engineers to implement safety features like filters and reporting tools

The role requires a high level of emotional intelligence, discretion, and adherence to ethical guidelines. Moderators also need resilience, because they may be exposed to disturbing or sensitive material during their work.

Skills and Qualifications for Content Moderation Jobs

Success in content moderation and online safety roles hinges on several key skills:

– Attention to Detail: Moderators must accurately identify inappropriate content amid vast streams of information.

– Critical Judgment: Moderation decisions shape what users see, so moderators need sound judgment and a solid understanding of platform policies, especially for borderline cases.

– Communication: Clear reporting and, at times, user interaction are essential.

– Technical Proficiency: Familiarity with content management systems, moderation tools, and online community platforms is important.

– Emotional Resilience: Handling exposure to challenging and sometimes distressing content requires mental fortitude.

– Cultural Competency: Understanding cultural nuances helps moderators assess content fairly across diverse user bases.

While some positions require a bachelor’s degree in communications, law, psychology, or related fields, others prioritize experience and training in moderation tools and protocols.

Opportunities and Challenges in Content Moderation Jobs

Demand for content moderators continues to grow with the global expansion of digital communication. Remote platform work has opened these opportunities to people worldwide, including those seeking part-time or flexible employment.

However, the work is not without challenges. Moderators often deal with repetitive tasks, high-pressure situations, and exposure to graphic or harmful materials. Employers are increasingly recognizing the need to provide psychological support and periodic breaks to maintain moderators’ well-being.

How to Find Exclusive Remote Content Moderation Jobs

For those interested in pursuing careers in this field, several strategies can help secure remote platform work:

– Job Boards: Websites like Indeed, Glassdoor, and LinkedIn frequently list content moderation positions.

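– Specialized Hiring Platforms: Companies that recruit remote and contract workers, such as Appen and TELUS International (which acquired Lionbridge's AI business in 2020), often advertise moderation and related content-review roles.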

– Networking: Engaging with online communities dedicated to digital safety and moderation can reveal job leads and insider tips.

– Direct Company Applications: Major tech firms and social media platforms sometimes hire moderators directly through their career portals, although many also contract moderation work out to outsourcing firms.

Tailoring your resume to highlight relevant skills, completing training in content moderation tools, and preparing for interviews by studying the published community guidelines of the platforms you apply to can greatly improve your chances.

Conclusion

Content moderation jobs are a vital part of the digital economy, protecting users and keeping platforms trustworthy. With the expansion of remote platform work, these roles have become more accessible and attractive to a wide range of professionals. For people who care about online safety and community well-being, a career in content moderation and online safety can be both rewarding and impactful. As companies continue to invest in safer online experiences, demand for diligent, skilled moderators is likely to keep growing, creating meaningful employment opportunities around the world.